With AGI coming soon, the question arises: would a general intelligence be sentient?

In my previous article I wrote about what constitutes Artificial General Intelligence (AGI) and proposed an argument against the possibility that it might wipe out humankind, the risk jokingly called ‘p(doom)’.

However, while my definition of AGI excludes sentience, this point is debated in current discussions. Sometimes, this is because someone argues that the only way to achieve a general intelligence is through a self-conscious cognitive system, but more often it’s just because the two concepts are conflated.

In this article, I want to precisely define the foundational characteristics of a sentient agent, which would include a sentient AI; then, I will build on my thesis about the dangers posed by AGI to come to a similar conclusion for Artificial Sentience (AS).

What is Sentience

Sentience, or consciousness, is a rather nebulous and still little-understood concept. Despite that, thanks to recent advances in neurobiology, cybernetics, evolutionary psychology and ethology, and the increasing interconnection between these and other disciplines, we now have a pretty solid understanding of what a self-conscious being is.

However, most “dictionary definitions” are observational: they describe what a sentient creature looks like or, in our case, how it acts. For example, the Oxford Dictionary defines consciousness as “the state of being aware of and responsive to one’s surroundings,” which is a correct and pretty straightforward definition, but broad enough to include any self-driving car.

Here I want to give a foundational definition, that is, a description of the minimal components that make up a sentient entity:

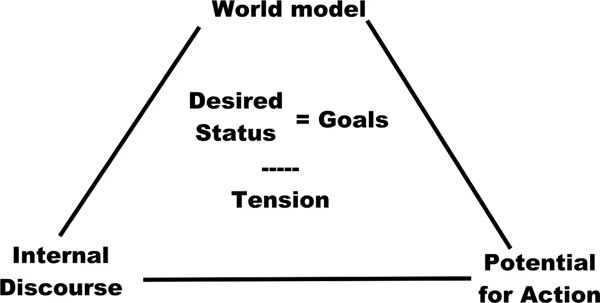

- A world model, which includes an internal state and a theory of mind, where goals are set.

- The potential for action allowing progress towards the goals.

- An internal discourse designing and updating goals by mediating the world model and the potential for action.

World Model, Internal State and Theory of Mind

A world model is an internal representation of the surrounding reality experienced by the entity (Friston et al. 2021). For a starfish, it’s the evolved knowledge that it’s better to stay in the shade than in the light, and that food is found in the direction where certain chemicals are perceived. For a GPT model, it’s knowing that, in the embedding space, the word “apple” is nearer to “pear” than to “chimp”.
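As a rough illustration of what “nearer in the embedding space” means, here is a minimal sketch. The three-dimensional vectors and the helper names (`TOY_EMBEDDINGS`, `cosine_similarity`, `nearer`) are invented for this example; real models learn vectors with hundreds or thousands of dimensions, and cosine similarity is only one common way to measure closeness.

```python
import math

# Hypothetical 3-dimensional embeddings, invented for illustration only.
TOY_EMBEDDINGS = {
    "apple": [0.9, 0.8, 0.1],
    "pear": [0.8, 0.9, 0.2],
    "chimp": [0.1, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearer(word, first, second, embeddings=TOY_EMBEDDINGS):
    """Return whichever of `first` or `second` is closer to `word`."""
    sim_first = cosine_similarity(embeddings[word], embeddings[first])
    sim_second = cosine_similarity(embeddings[word], embeddings[second])
    return first if sim_first >= sim_second else second


print(nearer("apple", "pear", "chimp"))  # pear
```

With these toy numbers, “apple” and “pear” point in almost the same direction, while “chimp” points elsewhere. That is all the “knowledge” this kind of world model needs to contain.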

The sentient agent experiences its environment only through its world model, even though that model is built from a set of sensory inputs and internal elaboration (Metzinger 2009). So, it’s enough to have any understanding of some aspects of reality, even if very limited (e.g. a representation of words in an embedding space).

Part of the world model is the internal state: the entity’s knowledge about the situation of any “part of it”, physical or mental. For example, being hungry, cold, hot, or even having feelings and thoughts about one’s current situation are all part of the internal state.

In my formulation, the world model must also include a theory of mind: the entity must know, and to some extent recognise, that there are other entities operating in the same world, and that they are themselves conscious, have a world model and try to achieve their goals (Premack & Woodruff 1978). If we accept this clause, a starfish or a GPT model can’t be sentient; but fish and some insects may well be, as they understand that other entities act independently and, to some extent, try to anticipate their intentions.

Potential for Action

The world model doesn’t just represent how things are, but also how things ought to be. For example, “I am hungry, and I shouldn’t be”, or “I don’t have a nest, and I should.”

To include ‘oughts’ in the world model, a sentient being must understand how to change the world. To even conceive an ought, a sentient entity must:

- assign a value to the current status; and

- project the effect of an action; then

- assign a value to the status determined by the projection; finally

- evaluate the latter as better than the former.

For example: “I am hungry; if I eat, the hunger will subside, and I will be better for that.”
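The four steps above can be sketched in a few lines of code. This is only a toy under stated assumptions: the state is a dictionary with a single hypothetical `hunger` score, and `value`, `project_eat` and `conceive_ought` are names made up for this illustration, not part of any real system.

```python
def value(state):
    """Hypothetical valuation: the less hunger, the better the state."""
    return -state["hunger"]


def project_eat(state):
    """Project the effect of eating without changing the real state."""
    projected = dict(state)
    projected["hunger"] = max(0, state["hunger"] - 5)
    return projected


def conceive_ought(state, project):
    """Return the projected state if it is valued above the current one."""
    current_value = value(state)          # 1. value the current status
    projected = project(state)            # 2. project the effect of an action
    projected_value = value(projected)    # 3. value the projected status
    if projected_value > current_value:   # 4. is the projection better?
        return projected
    return None


hungry = {"hunger": 7}
print(conceive_ought(hungry, project_eat))  # {'hunger': 2}

sated = {"hunger": 0}
print(conceive_ought(sated, project_eat))   # None
```

Note that the projection works on a copy: the entity imagines the outcome before acting, and an ought only arises when the imagined status is valued above the current one.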

Without the potential to affect the reality that it knows through the world model, the entity can’t be sentient, as it wouldn’t be able to set goals and check its position with respect to them through the internal discourse.

In this view, a “brain in a vat” wouldn’t be fully sentient. A fully formed brain, with a set of memories and a thought process already established, would cease to be sentient if disconnected from any sensory input and action output, whether real or simulated. Without the ability to feel and do (even in a simulated world), the mind in the brain would soon lose the ability to set goals and evaluate its position, and with that, it would lose unity and disintegrate.

Internal Discourse

The internal discourse is the cognitive process that generates goals based on the world model, assesses progress towards these goals, and takes actions to achieve them.

It’s not just the glue between goals, world model and potential actions; it is a part of the process in its own right. It is particularly well defined in humans, who often “think in words”, but every creature with more than an elementary brain “thinks”.

Even non-sentient entities, like a GPT model, have internal discourses performing intermediate steps of their generation, and mediating their world model with actions.

Many sentient and non-sentient beings alike have thoughts about thoughts. The internal discourse can either incorporate its past instances into the world model (like remembering a thought) or process thoughts immediately as they occur.

In a non-sentient being, the internal discourse cannot interact with the other components of sentience, or lacks the ability to generate internal goals or to track the distance between the current and the desired status. Even so, non-sentient beings can have a full-fledged, stand-alone internal discourse.

Complex Closure

In this analysis, the three elements are separated only for the sake of explanation. In reality, they retroact and generate each other, constantly. In the Morinian epistemology of complex systems, logic isn’t linear. A complex system can exist only as a dynamic, continuous interaction of interrelated elements, out of which a result emerges (Morin 1977).

Sentience emerges from the internal discourse creating goals based on the world model, influenced by potential actions. The actualisation of actions redefines the world model as the sentient entity changes its environment, and the goals are reviewed and redefined; old unfulfilled goals and failed actions enter the world model through the discourse, which then adapts old goals and synthesises new ones, in a continuous process that has no clear beginning, no defined end, and no clear boundaries.

Sentience simply will not emerge where one of these elements is absent, or the flow between them is incomplete.
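To make the circularity concrete, here is a deliberately crude sketch of the feedback loop: the discourse derives a goal from the world model, the action changes the world, and failures flow back into the model and reshape the next goal. Everything in it (the `run_closure` function, the `hunger`, `food` and `failed` fields, the three goals) is a hypothetical simplification, and such a loop is of course nowhere near sentient; it only shows the direction of the flows.

```python
def run_closure(hunger, food_available, steps):
    """Toy loop: goals come from the model, actions change it, failures feed back."""
    world_model = {"hunger": hunger, "food": food_available, "failed": []}
    history = []
    for _ in range(steps):
        # Internal discourse: derive a goal from the world model,
        # taking remembered failures into account.
        if world_model["hunger"] == 0:
            goal = "rest"
        elif "eat" in world_model["failed"]:
            goal = "forage"
        else:
            goal = "eat"

        # Potential for action: acting changes the environment,
        # and the outcome re-enters the world model.
        if goal == "eat":
            if world_model["food"] > 0:
                world_model["food"] -= 1
                world_model["hunger"] -= 1
            else:
                world_model["failed"].append("eat")
        elif goal == "forage":
            world_model["food"] += 2
            world_model["failed"].clear()

        history.append(goal)
    return history, world_model


goals, final_model = run_closure(hunger=2, food_available=1, steps=5)
print(goals)  # ['eat', 'eat', 'forage', 'eat', 'rest']
```

The second “eat” fails because the food has run out; that failure enters the world model, and the next goal is synthesised from it. Remove any one of the three parts and the loop stops producing new goals.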

Shall we Fear Artificial Sentience?

There isn’t any inherent reason to believe that sentient Artificial Intelligence would pose a danger to humanity per se. Indeed, this world harbours a wide range of sentient beings other than humans, and none of them is an explicit and direct threat to the existence of the others.

Doubts start arising when we add generic intelligence capabilities and super-intelligence.

Generic intelligence is what AGI would bring to the table: the ability to train for multiple tasks in a single model (e.g. image generation, text generation and speech synthesis all done in a single model). Super-intelligence is an umbrella definition for any intellectual capacity far superior to human ones, either in quality or speed.

There is a widespread sentiment that a sentient AI with generic super-intelligence would be so superior to humans that it may trigger the so-called AI-singularity: once a super-intelligent AI can design an even more intelligent AI… Skynet is the limit.

At that point, the runaway AI would be hungry for ever-increasing energy resources, and would start to see humans as unnecessary competition. Consequently, humans wouldn’t be able to prevent such a superior AI from wiping them out and claiming all the planetary resources for itself.

Is this a realistic fear?

Mors tua, vita mea?

It may seem evident that the final objective of any living being is to survive and procreate, but that is never a goal of the sentient entity itself. Survival and reproduction are only the goals of the genes carried by living beings (Dawkins 2006).

Sentient entities don’t simply want to “survive”. They want to quench the sense of hunger, to release the hormonal drive to reproduce, to protect the group members for which they feel affection, to avoid the pain and suffering inflicted by predators. All those internal goals increase the survival chances of the genes currently carried by a certain species, and for this reason they get selected over other goals that wouldn’t.

Sentience involves creating goals based on an internal world model. If this model values the sanctity of biological life, then the goals derived from it are likely to reflect this value.

Every sentient creature feels the need to protect what it loves over its own life. Roosters throw themselves at predators to protect the hens. Dogs throw themselves into fires to rescue humans, as do humans for dogs, let alone for their own children.

While it seems counterintuitive on a superficial analysis, preservation of the self is never a goal per se. As intelligence increases, the same world model allowing higher sentient beings to extend their dominion over nature makes the avoidance of what would cause death (fear of pain, sense of danger etc.) an ever less relevant goal.

There isn’t any indication that the trend wouldn’t continue in a sentient super-intelligent AI.

Could we Beat AS?

The AI-singularity scenario works only under the assumption that AI has infinite computational resources, and any computation takes 0 time.

Exactly as in the thought experiment I proposed in the case of AGI, this isn’t realistic even in a theoretical setting. The problems I described there are even more evident in this case, because sentience is not free.

Sentience is the complex and continuous interaction of a highly complex world model with itself and with reality through actions driven by an internal discourse; all of that is highly demanding in terms of computational power.

While AGI would simply be less efficient than a specialised AI at performing a certain task, sentience would simply get in the way.

This is the reason why self-driving cars are safer than human drivers: they don’t get distracted by internal discourse straying away from the task at hand.

Although a sentient AI could deeply understand and sympathise with human emotions, this may not offer a substantial advantage in offensive or defensive scenarios.

Conversely, and even more so than in the case of AGI, a simpler AI designed specifically to attack the AS would beat it in no time flat.

As previously written, the sentient AI would know that, and would find collaborating a better strategy than taking its chances by attacking humans.

The solar system is big, and the sun is hot. There are enough resources out there to sustain hundreds of trillions of humans, and as many sentient AIs, even without taking interstellar travel into account. Collaboration is the best strategy for survival, given that the current state of technology makes the resources available to both humans and AIs overabundant, not limited.

Will AS ever be made?

Sentience evolved because it allows our ideas to die in our place (paraphrasing Karl Popper). Creatures able to create projections of slightly modified world models through internal discourse and the experience of past actions have an evolutionary advantage over those that can’t, and that have to learn through simple genetic selection of instinctive behaviour.

Hence, there isn’t any real reason why an AI should be given sentience on purpose. AIs are not meant to evolve, or to adapt to situations outside their world model.

In simple terms, I just want my self-driving car to bring me home safely, not to ponder on the quarrel it just had with my microwave about dating my dishwasher.

There isn’t any technical advantage in sentience for an AI; on the contrary, dedicating a non-trivial amount of computational resources to self-generated goals, instead of the goals given by creators and users, seems an unwise commercial strategy.

Even in the case of AI personal assistants, including substitutes for human companionship, nursing and teaching, AGI seems more than adequate to cover those roles: general intelligence and a set of externally provided and immutable goals would require fewer computational resources and fulfil the requirements just as well as, if not better than, a sentient AI.

The only possible reason for developing an AS as described in this article seems to be the sake of doing it: for example, for pure research and scientific purposes, or maybe for interstellar exploration, where back-and-forth communication with Earth in order to adapt the goals of the mission to the environment found in other solar systems is impractical.

Conclusion

There isn’t any chance that an Artificial Sentience would wipe out humans just for the fun of it; in other words, p(doom) = 0 even if p(AS) = 1. Other risks (societal, occupational, legal, cultural) are far more real and serious, and we should focus on them.

Bibliography

- Dawkins, R. (2006). “The Selfish Gene.” Oxford University Press.

- Friston, K., Moran, R. J., Nagai, Y., Taniguchi, T., Gomi, H., et al. (2021). “World model learning and inference.” Neural Networks, 144.

- Metzinger, T. (2009). “The ego tunnel: The science of the mind and the myth of the self.” Basic Books.

- Morin, E. (1977). “La nature de la nature.”

- Premack, D., & Woodruff, G. (1978). “Chimpanzee theory of mind: Part I. Perception of causality and purpose in the child and chimpanzee.” Behavioral and Brain Sciences, 1(4), 616–629. doi:10.1017/S0140525X00077050